The Metrics We Measure Because We Can

But should we? Exploring the things we measure because we were told that's how it's done, versus new community metrics for the funky new day and age we're in.

There’s a dashboard somewhere - probably open in a tab you’ve had pinned for months - showing you daily active users, posts per week, likes, replies, and maybe a “health score” that was configured by someone who no longer works at your company. You glance at it before your quarterly business review. You export it to a slide. You present the numbers with the confidence of someone who knows what they mean.

But do you? Like community manager to community manager… do you?

We’ve been measuring community engagement the same way since the forum era, and honestly, most of it made sense then… when the platform was the community, when showing up to post was the whole point, when activity volume was a reasonable proxy for value. That world still exists in some corners. But for a lot of us, the community has quietly grown into something more complex than a post count can hold. We chatted about this earlier in *From Destination to Infrastructure*.

So here’s the uncomfortable question: What if I forced you to strip out every default metric your platform hands you - the ones you didn’t choose but inherited, or that some leader insisted on because that’s how it was done - and build your measurement framework from scratch? What would you actually want to know?

Answering that question honestly is the most useful thing you can do for your community strategy.

Historically, what we’ve reached for is traffic, membership, and engagement. How many people showed up, how many joined, how many did something while they were there. These made sense as a starting framework. They’re super visible, they’re easily trackable, and in the early days of community-building they gave you a reasonable read on momentum. A growing member count meant you were doing something right. A spike in posts meant the conversation was alive. Direct traffic told you people were coming back on purpose, not just stumbling in from a search.

The problem isn’t that these metrics are wrong. It’s that they’re incomplete in ways we’ve mostly agreed not to talk about. Membership counts don’t tell you if the right people joined or if they ever found what they came for. Engagement volume doesn’t distinguish between a genuinely helpful conversation and a three-page thread that went nowhere useful. And direct traffic… well, direct traffic just means someone knew your URL. It doesn’t mean anything worked. In fact, in the age of AI, it might even signal that the right information isn’t being ingested by the tools people now ask first.

So what would actually work?

Chances are you didn’t come here to read empty musings. At least I hope not. You came here for some real-world nerd perspective.

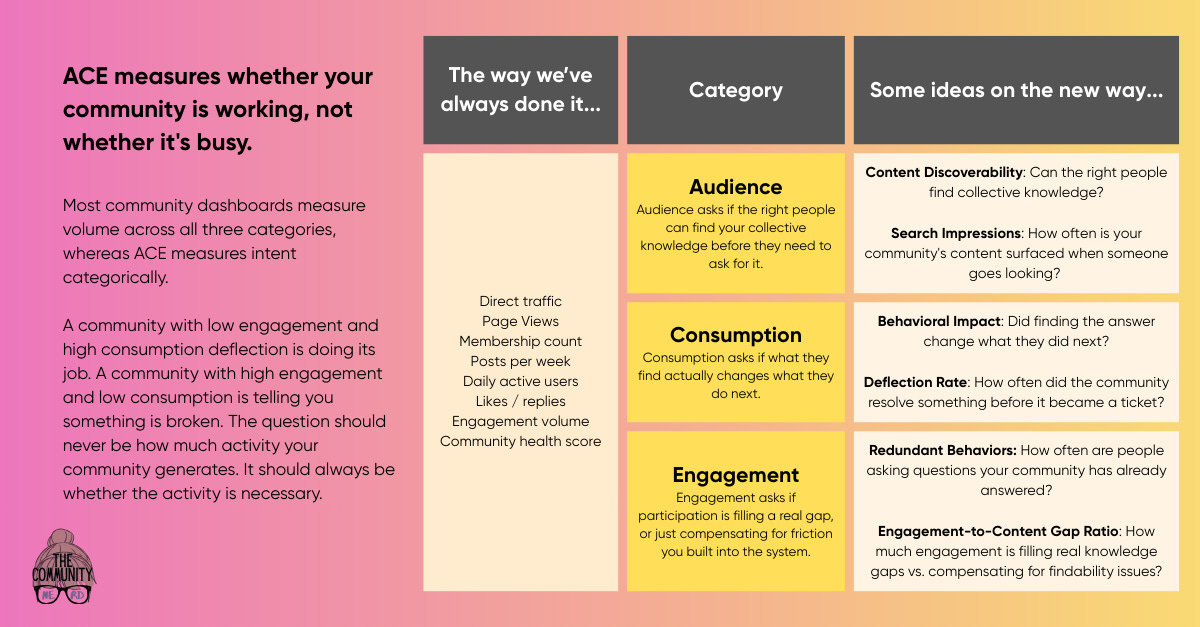

And while I don’t have all the answers (I can’t even claim to have perfected a near answer), I’ve been working on an approach I call ACE: Audience, Consumption, Engagement. It may be a bit biased toward the support communities I tend to run, but elements of it apply to all communities.

Audience isn’t about how many members you have. (I retroactively apologize for the ferocious eye rolls I’ve given that metric when asked and the violent eye rolls I’ll continue to give it.) It’s about whether the people who need your collective knowledge can actually find it. Discovery is the metric. Can someone land on your community from a search - internal or external - and reach something useful without already knowing exactly what to look for? If your answer is “our content is all in there somewhere,” that’s not a measurement strategy. That’s a hope, a prayer, a concept of a plan.
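If you want to put a number on discovery, here's one minimal sketch in Python. It assumes your analytics can export sessions with an entry source and a flag for whether the visitor reached something useful; `Session`, `entry_source`, `reached_useful`, and the source labels are hypothetical names, not any platform's real export format. It only counts sessions that started from a search, since a direct visit means someone already knew where to look.

```python
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical fields: map these to whatever your analytics export provides.
    entry_source: str     # e.g. "external_search", "internal_search", "direct"
    reached_useful: bool  # e.g. viewed a thread with an accepted solution


# Assumed labels for sessions that started from a search, internal or external.
SEARCH_SOURCES = {"external_search", "internal_search"}


def discovery_rate(sessions: list[Session]) -> float:
    """Share of search-originated sessions that reached something useful."""
    searched = [s for s in sessions if s.entry_source in SEARCH_SOURCES]
    if not searched:
        return 0.0  # no search traffic at all: nothing to measure
    return sum(s.reached_useful for s in searched) / len(searched)


sessions = [
    Session("external_search", True),
    Session("internal_search", False),
    Session("direct", True),  # ignored: the visitor already knew where to go
    Session("external_search", True),
]
print(round(discovery_rate(sessions), 2))  # 0.67
```

What counts as "useful" is the real decision here; an accepted solution is just one assumed stand-in.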

Consumption is where things get interesting - or at least I think things get interesting (says the nerd delighted by CSV files). Did the knowledge seeker find it, and did it actually land? Did it change what they did next? The behavioral signal matters more than the view count. Someone reading a solution and then immediately raising a support ticket anyway, or not following through on the intended product action, is not a consumption success. And that’s a gap you need to know about. The goal isn’t traffic to your content; it’s your content doing the job it was created to do.
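For the CSV-file nerds, here's a rough sketch of one consumption signal: the share of solution views that were *not* followed by a support ticket from the same person within some window. The `SolutionView` and `Ticket` records, the user-ID join, and the 24-hour default window are all assumptions for illustration; your platform and ticketing system will name and link these differently. It also covers only the ticket half of the question, not the product-action half.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class SolutionView:
    user_id: str  # hypothetical: assumes community and ticket IDs can be joined
    viewed_at: datetime


@dataclass
class Ticket:
    user_id: str
    opened_at: datetime


def consumption_success_rate(views, tickets, window=timedelta(hours=24)):
    """Share of solution views NOT followed by a ticket from the same user within `window`."""
    if not views:
        return 0.0  # no views: nothing consumed, nothing to measure
    tickets_by_user = {}
    for t in tickets:
        tickets_by_user.setdefault(t.user_id, []).append(t.opened_at)
    failures = 0
    for v in views:
        opened = tickets_by_user.get(v.user_id, [])
        # A ticket opened soon after reading the solution suggests it didn't land.
        if any(v.viewed_at <= ts <= v.viewed_at + window for ts in opened):
            failures += 1
    return 1 - failures / len(views)


t0 = datetime(2024, 1, 1, 9, 0)
views = [SolutionView("ana", t0), SolutionView("ben", t0), SolutionView("cy", t0)]
tickets = [
    Ticket("ana", t0 + timedelta(hours=2)),  # read the answer, filed a ticket anyway
    Ticket("ben", t0 + timedelta(days=3)),   # outside the window: not counted
]
print(round(consumption_success_rate(views, tickets), 2))  # 0.67
```

The window is a judgment call: widen it and more tickets count as "didn't land," so pick it deliberately rather than by default.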

And engagement… man, this one requires the most honesty. It should be a measure of necessity, not volume. In a well-functioning support community, engagement is what happens when the existing knowledge base doesn’t cover it. If you’re seeing high engagement, that’s not automatically a health signal. It might mean you have redundant flows, a lack of necessary knowledge, or friction that’s pushing people into creating new threads when the answer already exists three pages back. Engagement when necessary is a feature. Engagement as a substitute for findability is a bug.
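One way to start measuring engagement as necessity, sketched in Python under a big assumption: that someone (a moderator, a workflow, a tag) marks threads that were resolved by linking to content that already existed. `Thread` and `resolved_by_existing_link` are hypothetical names. A low share here is the "answer already exists three pages back" bug showing up as a number.

```python
from dataclasses import dataclass


@dataclass
class Thread:
    thread_id: str
    # Hypothetical flag: True if the thread was resolved by pointing to content
    # that already existed (a duplicate, a KB article, an older thread).
    resolved_by_existing_link: bool


def necessary_engagement_share(threads: list[Thread]) -> float:
    """Share of new threads that needed genuinely new knowledge to resolve."""
    if not threads:
        return 0.0
    redundant = sum(t.resolved_by_existing_link for t in threads)
    return 1 - redundant / len(threads)


threads = [
    Thread("t1", True),
    Thread("t2", True),
    Thread("t3", False),
    Thread("t4", True),
]
print(necessary_engagement_share(threads))  # 0.25
```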

None of this means you should torch your existing dashboards tomorrow. Traffic, membership, and engagement volume still tell you something useful for operational health checks. They’re just not the whole story, and we’ve spent a decade letting them stand in for one. The gap between what you’re currently measuring and what you actually care about is where your strategy is quietly leaking brain juice.

You probably won’t rebuild your entire measurement framework this quarter (unless you’re a bona fide data nerd or have one kicking around). But you could start with one honest audit: review each metric on your current dashboard and ask what decision it actually informs. If you can’t answer that cleanly, you already know what to do.

Go find out if your community is doing its job… not just whether people showed up.