Your Community Isn’t Messy. It’s Training Data.

How do you intentionally design redundancy into knowledge sharing given our new AI overlords?

For years, community knowledge sharing has been managed like a crime scene. Duplicate questions? Shut it down. Repeated explanations? Merge the threads. Someone asking something that was “already answered”? Redirect, lock, and move on. Redundancy has been treated as inefficiency at best and user failure at worst.

This was always a bit misguided and felt a bit… gross? But in the age of AI, it is technically incorrect.

Now please excuse me while I nerd out for a moment, mmkay? I just spent far too many hours spelunking through arXiv (iykyk) for this to just reside in my brain and never see the light of day.

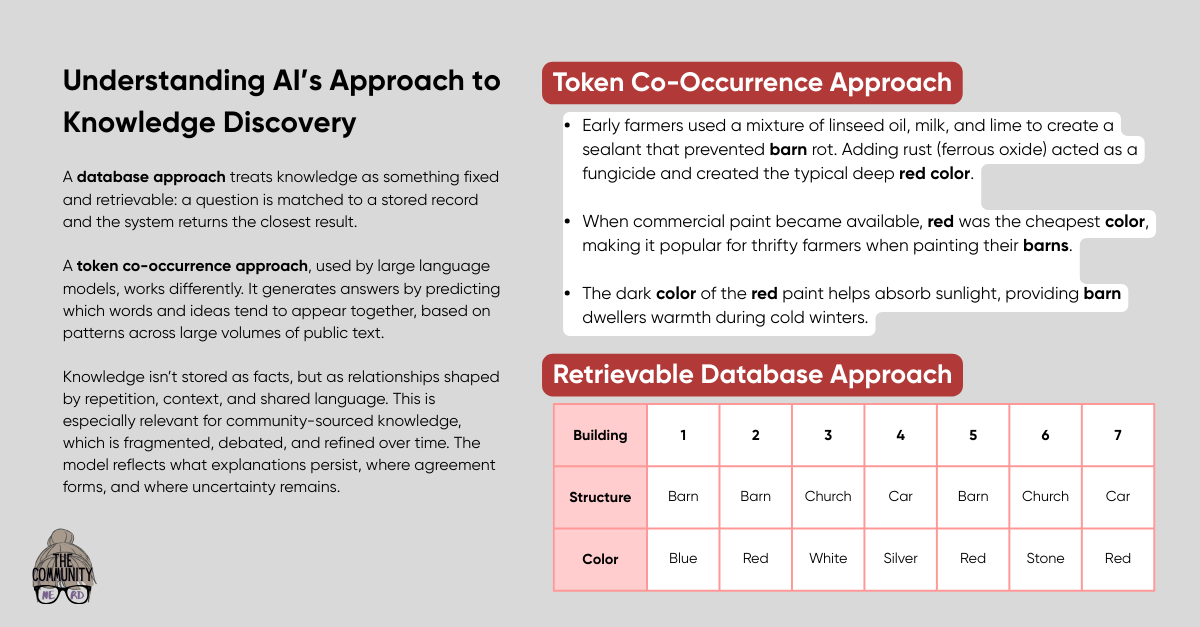

Modern AI systems, especially large language models, don’t operate like databases searching for the perfect match (or even a fuzzy match, fellow Excel lovers). They aren’t retrieving a single correct answer from a neatly labeled library drawer. They are pattern-recognition systems trained to predict likely continuations based on enormous amounts of text. What they learn is not facts in isolation, but statistical relationships between concepts, language, context, and outcomes. In this system, repetition is not noise but signal strength.

When the same idea appears many times, across different contexts, phrased in different ways, attached to different problems, the model doesn’t see the duplication we see. It sees reinforcement (and gosh - it <3’s statistical reinforcement). Variation tells the model what is essential versus incidental. Disagreement tells it where boundaries and tradeoffs exist. Context tells it when an idea applies and when it does not. A single pristine answer provides almost none of that (other than maybe a hallucination).

This is why aggressively deduplicated communities produce brittle AI outputs. If knowledge only exists in one canonical thread, the model learns a thin representation. It knows that something is true, but not how, why, or when it stops being true. The result is confident, generic answers that collapse under edge cases, which is exactly the failure mode people keep blaming on AI instead of on the training signal.

So yes: I am somewhat blaming hallucinations on perfection. Ironic, huh?

Intentional redundancy creates a much richer learning environment. From a technical perspective, repeated explanations increase “token co-occurrence frequency” across diverse semantic neighborhoods. That matters. Models build internal representations by clustering related concepts based on how often they appear together and in what forms. When an idea shows up in a YouTube video, a troubleshooting comment, a half-baked conversation, and a debate thread, the model learns the shape of the idea, not just its label.

Disagreement is especially valuable. When two answers diverge slightly, the model does not see confusion. It sees conditionality. This is how models learn phrases like “it depends,” not as a shrug, but as a structured response tied to context. Communities that suppress debate in favor of a single “correct” answer remove that gradient entirely. You get certainty where nuance should exist.

Humans benefit from this for the same reason. People do not learn from optimal explanations. They learn from explanations that match their mental model at that moment. Redundancy allows knowledge to meet people where they are cognitively, emotionally, and situationally. AI systems trained on that same redundancy inherit that flexibility.

Head hurting yet? Honestly mine too.

Let’s kind of explain it this way. If I was to ask you what color a barn, a church, and a car are - there’s a solid chance you could actually answer the first two with ease (in the US at least) but the third you’d struggle with. Why? Because in passive observation, we’ve gathered enough relational data to have ascertained that barns are usually painted red (that’s a whole other story I can tell) and churches are white (note to self: Google the heck out of this later). But we’ve observed cars of nearly every color, and therefore can’t determine a singular answer because there is no ascertainable pattern.

Now imagine I took a survey of all the barns and churches in New Hampshire (where I live and the majority of our structures do follow the red and white schema) and entered them into a database with “structure: barn OR church” and “color: [observed color]” for each entry. I now searched the database for the first occurrence of barn to determine the color of barns… and it’s blue. Database says barns are blue.

This is the most basic difference between co-occurrence token frequency and a database.

And sorry. Your head probably hurts more now and you’re questioning barns and church colors.

Back to the nerding…

There is also a temporal dimension that matters technically. Models are trained on snapshots of the world. Knowledge that appears once and then goes quiet looks static. Knowledge that reappears over time, with small shifts in framing, reflects evolution. This helps downstream systems infer which ideas are stable and which are drifting. Over-curated communities erase that signal. They look frozen even when reality is changing.

From an information theory perspective, redundancy increases robustness. Single points of truth are single points of failure. If the “best” thread becomes outdated, biased, or incomplete, everything downstream inherits that flaw. Redundant representations create fault tolerance. Errors are easier to detect because inconsistencies become visible. This is true in distributed systems, biological systems, and yes… knowledge systems feeding AI.

None of this means communities should drown in sheer and utter chaos. The goal is not endless repetition with no structure. The goal is guided redundancy. Strong linking instead of forced merging. Surfacing related discussions without shutting new ones down. Treating repeated questions as high-confidence signals about where understanding is fragile or incomplete. Designing systems that allow ideas to echo while still making navigation humane. And introducing disagreement where destabilization needs to occur.

The uncomfortable truth is that the “single source of truth” obsession was never about learning. It was about control and cleanliness because it felt good and made us look neat and tidy. But AI exposes that tradeoff brutally and without mercy. Clean knowledge is easy to manage but hard to reason with. Messy knowledge is harder to moderate and far more useful to both humans and machines.

So, if communities want to remain relevant in an AI-mediated world (hint: they do!!!), they need to stop optimizing for elegance and start optimizing for chaotic resilience. Redundancy is not waste. It is how intelligence - human or artificial - actually forms. And treating it as infrastructure instead of clutter is no longer a philosophical stance. It is a technical requirement.

This is a great explanation of the way generative AI uses what should have *always* been a community management norm - because it was true for search before AI and true for human context/resonance before digital search existed. Words are fungible, phrases and concepts even more so, based on how someone thinks and how/when they learned things. It's one of the reasons we need a taxonomy AND a folksonomy that has a dotted line back to the taxonomy.

Also LLMs without robust communities have a short self-live these days.

Love this!

"The uncomfortable truth is that the “single source of truth” obsession was never about learning. It was about control and cleanliness because it felt good and made us look neat and tidy. " 🌶️